My weekend feed was flooded with discussions about a GitHub repo called WiFi-DensePose. 16,900+ stars. MIT licensed. Published on PyPI. Docker images ready. The promise? Using ordinary WiFi signals to track human 3D pose through walls — no cameras, no sensors, no wearables. Just your router.

I couldn't not pull the thread. And what I found sharpened a question I have carried for a long time: if AI can produce codebases that pass every quality check but solve nothing underneath, what does "good engineering" even mean?

This could be one of the most polished vibe coding hoaxes the open source world has witnessed.

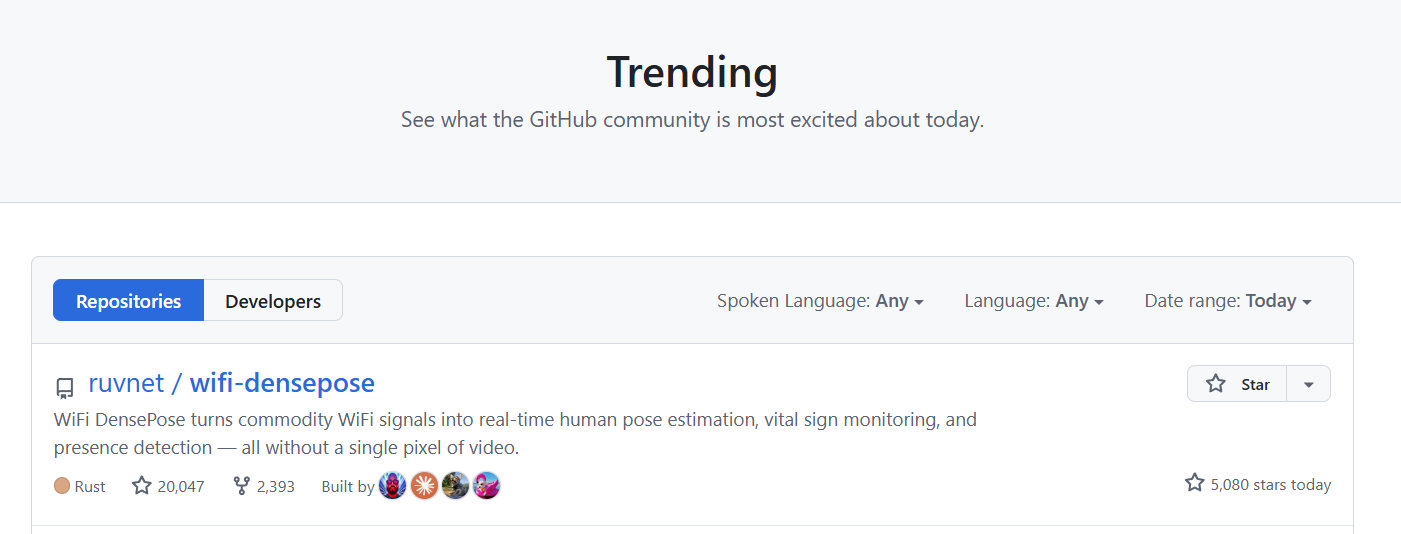

Figure 0: The repository passed 20k stars shortly after I first posted this article, gaining another 5k within hours. This screenshot serves as evidence that the wording is, at least for now, clearly MISLEADING.


A Textbook Architecture

Version one was pure Python, with a complete signal processing pipeline for cleaning, denoising, and extracting features from Channel State Information (CSI). One full pass took approximately 15 milliseconds.

Version two rewrote the core signal processing pipeline entirely in Rust. According to repository benchmarks, processing time dropped from 15 milliseconds to 18.47 microseconds — an 810x speedup, pushing theoretical throughput to 54,000 frames per second.
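The quoted benchmark figures can be sanity-checked with a few lines of arithmetic. This sketch uses only the two timings cited above; the variable names are mine, not the repository's.

```python
# Sanity check of the repository's quoted benchmark numbers.
PYTHON_PASS_SECONDS = 15e-3    # ~15 ms per pass (version one, as quoted)
RUST_PASS_SECONDS = 18.47e-6   # 18.47 microseconds per pass (version two, as quoted)

speedup = PYTHON_PASS_SECONDS / RUST_PASS_SECONDS
frames_per_second = 1.0 / RUST_PASS_SECONDS

print(f"speedup: {speedup:.0f}x")
print(f"theoretical throughput: {frames_per_second:,.0f} frames/s")
```

The numbers are internally consistent: roughly 812x and about 54,000 frames per second. That only shows the arithmetic holds. It says nothing about whether the frames being processed are real CSI.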

The architecture follows a clean dual-stack pattern: Python handles REST API and WebSocket layers for external consumers, while Rust manages heavy compute. The interface boundaries remain crisp — elegant separation of concerns. This is textbook high-throughput data pipeline design.

A Brilliant Dev Team

Unpacking the source code and accessing the hidden .claude/ directory reveals something unexpected — a multi-agent AI swarm. A coordinated network of AI agents with clearly defined roles:

.claude/agents/

├── analysis/ # code review & quality analysis

├── architecture/ # system architecture design

├── consensus/ # consensus mechanisms

│ ├── byzantine-coordinator.md

│ ├── gossip-coordinator.md

│ ├── raft-manager.md

│ └── quorum-manager.md

├── core/ # planning, coding, testing

└── devops/ # CI/CD & operations

These AI agents coordinate code changes using Raft consensus, Gossip protocols, and Byzantine fault tolerance — distributed systems primitives typically reserved for database replication and blockchain consensus. They execute their own code reviews and manage CI/CD pipelines.

This 16,900-star project was almost entirely produced by an AI-simulated software company. From a pure agent orchestration perspective, this is genuinely fascinating — how multiple AI agents coordinate and divide labor is a real engineering achievement.

But architecture is not product.

The Missing Core

The AI agent team constructed flawless scaffolding — API layers, Docker packaging, streaming infrastructure, hardware abstraction. Every surface-level quality signal indicates production-readiness.

But follow the call chain downward to where Channel State Information matrices should transform into human body coordinates and you find mock data. The system includes a built-in Mock Server. When no real CSI-capable hardware connects — which describes most users, since consumer WiFi hardware typically doesn't expose CSI — it operates with deterministic, pre-generated signals. Output coordinates remain synthetic. The documentation confirms: in simulated mode, data is generated from a deterministic reference signal.
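The fallback pattern described above might look something like the following. This is a minimal sketch, not the repository's code: `CsiSource`, `MockCsiSource`, and `open_csi_source` are hypothetical names, and the 56-subcarrier frame size is an assumption chosen for illustration.

```python
import math
from typing import List, Optional, Protocol


class CsiSource(Protocol):
    """Hypothetical interface for anything that yields CSI frames."""

    def read_frame(self, index: int) -> List[complex]: ...


class MockCsiSource:
    """Deterministic stand-in: the same index always yields the same frame."""

    def __init__(self, subcarriers: int = 56) -> None:  # 56 is an assumption
        self.subcarriers = subcarriers

    def read_frame(self, index: int) -> List[complex]:
        # A fixed reference sinusoid; nothing here depends on a radio or a room.
        return [
            complex(math.cos(0.1 * (index + k)), math.sin(0.1 * (index + k)))
            for k in range(self.subcarriers)
        ]


def open_csi_source(hardware: Optional[CsiSource]) -> CsiSource:
    # Hypothetical selection logic: fall back quietly when no CSI hardware exists.
    return hardware if hardware is not None else MockCsiSource()


source = open_csi_source(hardware=None)
frame_a = source.read_frame(0)
frame_b = source.read_frame(0)
print(type(source).__name__, len(frame_a), frame_a == frame_b)
```

Everything downstream of `open_csi_source` sees a well-typed stream of complex frames either way, which is why the surrounding API, streaming, and packaging layers can all work flawlessly without any real signal ever entering the system.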

A GitHub issue from a developer who resolved dependency errors documented discovering the system simply output randomly generated coordinates in MOCK_HARDWARE mode. The actual CSI processing logic for real hardware was "generic or missing."

Another issue flagged stars jumping from 1,300 to 3,000+ overnight with zero new commits across six months. On Hacker News, users noted the project's internal review concluded it was a "sophisticated mock system with professional infrastructure" where "core functionality requiring WiFi signal processing and pose estimation is largely unimplemented."

The creator's GitHub bio states projects exist "one step removed from reality."

A Reality Check from Physics

WiFi-based human sensing is genuine, published, peer-reviewed science — not fiction.

CMU's DensePose From WiFi (Geng, Huang & De La Torre, 2022) established foundational work by analyzing Channel State Information (CSI): per-subcarrier amplitude and phase data describing how a WiFi signal propagates. When human bodies move through the signal paths, they alter the multipath propagation patterns, and a suitable neural network can map those changes to 2D/3D pose coordinates.
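To make "per-subcarrier amplitude and phase" concrete: each CSI measurement is a complex number, and the two quantities fall out of it directly. The values below are made up for illustration and are not taken from any dataset.

```python
import cmath

# Made-up CSI values for four subcarriers of one antenna pair (illustrative only).
csi_frame = [complex(0.8, 0.6), complex(0.0, 1.0), complex(-0.5, 0.5), complex(1.0, 0.0)]

amplitudes = [abs(h) for h in csi_frame]        # signal attenuation per subcarrier
phases = [cmath.phase(h) for h in csi_frame]    # radians, in the range [-pi, pi]

for k, (amp, ph) in enumerate(zip(amplitudes, phases)):
    print(f"subcarrier {k}: amplitude={amp:.3f}, phase={ph:.3f} rad")
```

A pose model consumes many such frames over time, across every antenna pair. The extraction step is trivial; the hard part is everything that has to be learned from how those values change.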

But the gap between the research setup and what this repository implies is enormous.

The CMU research utilized 3 dedicated WiFi transmitters and 3 aligned receivers with research-grade network interface cards, capturing CSI across 30 subcarrier frequencies and 9 antenna pairs at 100 samples per second. The neural network trained on paired WiFi and video data in controlled environments with 1–5 people. The researchers themselves acknowledged their model "remains restricted by limited public training data" and struggled with limb detection reliability.

Consumer routers expose RSSI — Received Signal Strength Indicator. That's one number per access point. Not 56+ complex-valued subcarrier measurements per frame. The project's own FAQ confirms this: consumer WiFi exposes only RSSI, not CSI.
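To put the gap in numbers, here is the data volume implied by the CMU capture parameters quoted above, set against a single RSSI reading. The constant names are mine, and the comparison is per sample only, since an RSSI reporting rate is not given here.

```python
# Capture parameters as quoted for the CMU setup.
SUBCARRIERS = 30
ANTENNA_PAIRS = 9
SAMPLES_PER_SECOND = 100

csi_values_per_sample = SUBCARRIERS * ANTENNA_PAIRS               # complex values
csi_values_per_second = csi_values_per_sample * SAMPLES_PER_SECOND
rssi_values_per_sample = 1                                        # one real scalar

print(f"CSI: {csi_values_per_sample} complex values per sample")
print(f"CSI: {csi_values_per_second:,} complex values per second")
print(f"RSSI: {rssi_values_per_sample} scalar per sample")
```

That is 270 complex values per sample versus one scalar, and the scalar carries no phase at all.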

Even with proper CSI hardware, the challenges are formidable. A 2024 comprehensive survey documents fundamental obstacles: clock asynchronism between transmitter and receiver introduces time-varying random phase offsets; variable gain circuits cause random amplitude fluctuations; multi-device CSI alignment remains unresolved. Another study found that environment dependency means models trained in one setting frequently fail elsewhere, with researchers noting "the path towards a truly environment-independent framework is still uncertain."
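A small simulation shows why the phase offset problem matters. The sketch below assumes two receive antennas sharing one clock, so both see the same random offset in each frame. It then applies conjugate multiplication between the antennas, a mitigation commonly used in the sensing literature. The channel values are made up, and this is not presented as the survey's or the repository's method.

```python
import cmath
import random

random.seed(0)  # reproducible illustration

# Made-up "true" channel values for two receive antennas on the same NIC.
true_ant1 = complex(0.9, 0.2)
true_ant2 = complex(0.3, -0.7)


def measure(true_value: complex, offset_rad: float) -> complex:
    # An unsynchronised clock rotates the measurement by an unknown phase.
    return true_value * cmath.exp(1j * offset_rad)


raw_phases = []
products = []
for _ in range(3):
    offset = random.uniform(-cmath.pi, cmath.pi)  # new random offset every frame
    m1, m2 = measure(true_ant1, offset), measure(true_ant2, offset)
    raw_phases.append(cmath.phase(m1))
    products.append(m1 * m2.conjugate())  # the shared offset cancels here

print("raw phase per frame:", [round(p, 3) for p in raw_phases])
print("conjugate product phase:", [round(cmath.phase(p), 3) for p in products])
```

The raw phase jumps from frame to frame even though nothing in the simulated room changed, while the conjugate product stays constant. The trick only removes an offset common to both antennas, so it does nothing for alignment across separate devices, which the survey lists as unresolved.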

Closing: The Broken Bar

I'm not writing this to dunk on one repo. I'm writing it because WiFi-DensePose is a near-perfect case study for something every technical person needs to understand about the current moment.

Vibe coding has figured out how to exploit our trust heuristics.

For decades, we've used certain signals to evaluate software quality: clean architecture, comprehensive tests, good documentation, CI/CD pipelines, Docker images, PyPI packages, GitHub stars. These signals used to correlate strongly with human effort, expertise, and care. They were expensive to fake because they required genuine engineering knowledge.

AI agents can now produce all of these signals in hours if not minutes. The entire trust stack — from repo structure to API docs to test suites — can be generated by a swarm of coordinated AI subagents running consensus protocols on code merges. The cost of manufacturing credibility has collapsed to near zero.

This means our evaluation frameworks are broken. Stars don't mean vetted. Tests don't mean tested against reality. Architecture doesn't mean the hard problem is solved. Documentation doesn't mean someone understood what they were documenting.

For engineers: the only reliable signal left is at the boundary where software meets the physical world. Can it process real data? Does it solve the actual hard problem? Does the math work? That's where AI-generated code consistently fails — not in the scaffolding, but in the substance.
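One cheap probe of that boundary is to ask whether a pipeline's output depends on its input at all. The sketch below is a hypothetical harness: `mock_pipeline` and `toy_pipeline` are stand-ins I made up, not functions from any real project.

```python
from typing import Callable, List, Sequence

Frame = Sequence[complex]
Pose = List[float]


def mock_pipeline(frame: Frame) -> Pose:
    # Hypothetical stand-in for scaffolding that ignores its input.
    return [0.5, 0.5, 0.5]


def toy_pipeline(frame: Frame) -> Pose:
    # Hypothetical stand-in whose output is at least a function of the input.
    mean_amp = sum(abs(h) for h in frame) / len(frame)
    return [mean_amp, mean_amp / 2, mean_amp / 4]


def depends_on_input(pipeline: Callable[[Frame], Pose], a: Frame, b: Frame) -> bool:
    """Return True if two different inputs produce different outputs."""
    return pipeline(a) != pipeline(b)


frame_a = [complex(1, 0), complex(0, 1)]
frame_b = [complex(2, 0), complex(0, 3)]

print("mock:", depends_on_input(mock_pipeline, frame_a, frame_b))
print("toy:", depends_on_input(toy_pipeline, frame_a, frame_b))
```

Passing this check is necessary, not sufficient: `toy_pipeline` responds to its input and still knows nothing about human pose. Failing it, though, is a strong hint that the core is not doing the work the README describes.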

The irony isn't lost on me: I use vibe coding every day. Scaffolding K8s configs, vibe-checking firmware interfaces, even drafting automation tooling for work. It's genuinely powerful, and I'd be a hypocrite to pretend otherwise.

But there's a difference between using AI as an accelerator on problems you understand and letting it generate an entire codebase around a problem nobody actually solved. WiFi-DensePose is what happens when the second case meets a star-driven ecosystem that can't tell the difference.

The tools are getting better. The question is whether our ability to evaluate what they produce is keeping up. Right now, it seems not.

That said, I have to admit that the execution behind this repo is genuinely impressive. Strip away the hype and what remains is a well-orchestrated AI agent pipeline that produced enterprise-grade scaffolding in a matter of hours. What if that same capability were pointed at something real? For example, the scientific software ecosystem is full of brittle, undocumented legacy packages begging for exactly this kind of refactoring.

Maybe the story here isn't just a cautionary tale. Maybe it's also a glimpse of what's possible when the shell finally meets a real core.

References

- Geng, J., Huang, D. & De La Torre, F. (2022). DensePose From WiFi. arXiv.

- Geng, J. (2022). Dense Human Pose Estimation From WiFi. CMU Robotics Institute, CMU-RI-TR-22-59.

- ruvnet (2025). WiFi-DensePose GitHub Repository.

- WiFi-DensePose (2026). User Guide.

- GitHub Issue #9 (2025). Critical: Broken installation, missing dependencies, and non-functional core logic.

- GitHub Issue #12 (2025). Vibe coded non functional project with fake inflated 3k+ github stars.

- Hacker News (2025). WiFi DensePose: WiFi-based dense human pose estimation system through walls.

- InfoQ (2023). Carnegie Mellon Researchers Develop AI Model for Human Detection via WiFi.

- Liu, J. et al. (2024). Commodity Wi-Fi-Based Wireless Sensing Advancements over the Past Five Years. PMC.

- Cominelli, M. et al. (2023). Exposing the CSI: A Systematic Investigation of CSI-based Wi-Fi Sensing Capabilities and Limitations. arXiv.

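- YunPan_Plus (2026). 扒开1.4万Star的WiFi-DensePose源码:教科书般的Rust架构,与AI写的"空壳" [Tearing Open the 14k-Star WiFi-DensePose Source Code: A Textbook Rust Architecture and an AI-Written "Empty Shell"]. 云栈社区.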

Disclaimer: AI was used to assist with research, drafting, and grammar polishing. The opinions and conclusions are the author's own and do not represent those of any past or current employer. Feedback and corrections are always welcome.