Figure 1: An (oversimplified) FOMO timeline for agentic frameworks.

If you're like me, you've spent the past year drowning in AI agent tutorials. Every week a new framework drops - LangGraph, CrewAI, AutoGen, n8n workflows - and you've bookmarked them all, tried half, and finished none. You have 14 open browser tabs and three half-finished projects. Sound familiar?

Here's what I found after burning several weekends going down every rabbit hole: you can close most of those tabs. The industry spent 2025 racing in different directions - building elaborate orchestration layers, experimenting with multi-agent hierarchies, debating whether to use graphs or chains or something called "agentic RAG" - and quietly arrived at the same destination. While we were paralyzed by choice, the builders who actually shipped production agents all converged on the same answer.

If you've been waiting for the dust to settle - it just did. The architecture has been figured out. The frameworks have converged on something simpler. And the fact that you missed the chaotic sprint of 2024-2025 isn't a setback - it's a gift. Let me save you the FOMO spiral and explain where we actually are.

First, What Is an AI Agent?

Before we talk about where we're going, let's make sure we agree on what we're talking about. The word "agent" gets thrown around constantly - often to mean anything from a slightly fancy chatbot to a fully autonomous system taking over your workflow. The reality is both simpler and more exciting.

An AI agent, at its core, is three things: a large language model (LLM), a set of tools, and a loop. Not a chatbot - a chatbot answers questions. An agent does things. It takes actions in the world, observes what happened, and decides what to do next. That loop is the key difference.
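To make "LLM + tools + loop" concrete, here is a minimal sketch in Python. The LLM is replaced by a hypothetical `scripted_model` function that returns either a tool request or a final answer; a real agent would call a model API at that point. The tool `get_weather` and the message format are also assumptions for illustration only.

```python
from typing import Callable

def get_weather(city: str) -> str:
    # Hypothetical tool with a stubbed result; a real tool would call an external API.
    return f"Sunny in {city}"

TOOLS: dict[str, Callable[[str], str]] = {"get_weather": get_weather}

def scripted_model(history: list[dict]) -> dict:
    """Stand-in for an LLM call: decide the next step from the history so far."""
    if not any(m["role"] == "tool" for m in history):
        # Nothing observed yet, so request an action.
        return {"type": "tool_call", "tool": "get_weather", "arg": "Tokyo"}
    # An observation exists, so finish using it.
    return {"type": "final", "content": f"Done: {history[-1]['content']}"}

def run_agent(task: str, model=scripted_model, tools=TOOLS, max_steps: int = 10) -> str:
    history = [{"role": "user", "content": task}]
    for _ in range(max_steps):  # the loop
        step = model(history)   # the LLM decides
        if step["type"] == "final":
            return step["content"]
        result = tools[step["tool"]](step["arg"])  # a tool acts
        history.append({"role": "tool", "content": result})  # the result is observed
    return "Stopped: step limit reached"

answer = run_agent("What's the weather in Tokyo?")
print(answer)  # Done: Sunny in Tokyo
```

The `max_steps` cap is a design choice worth keeping even in toy agents: without it, a model that never decides it is done would loop forever.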

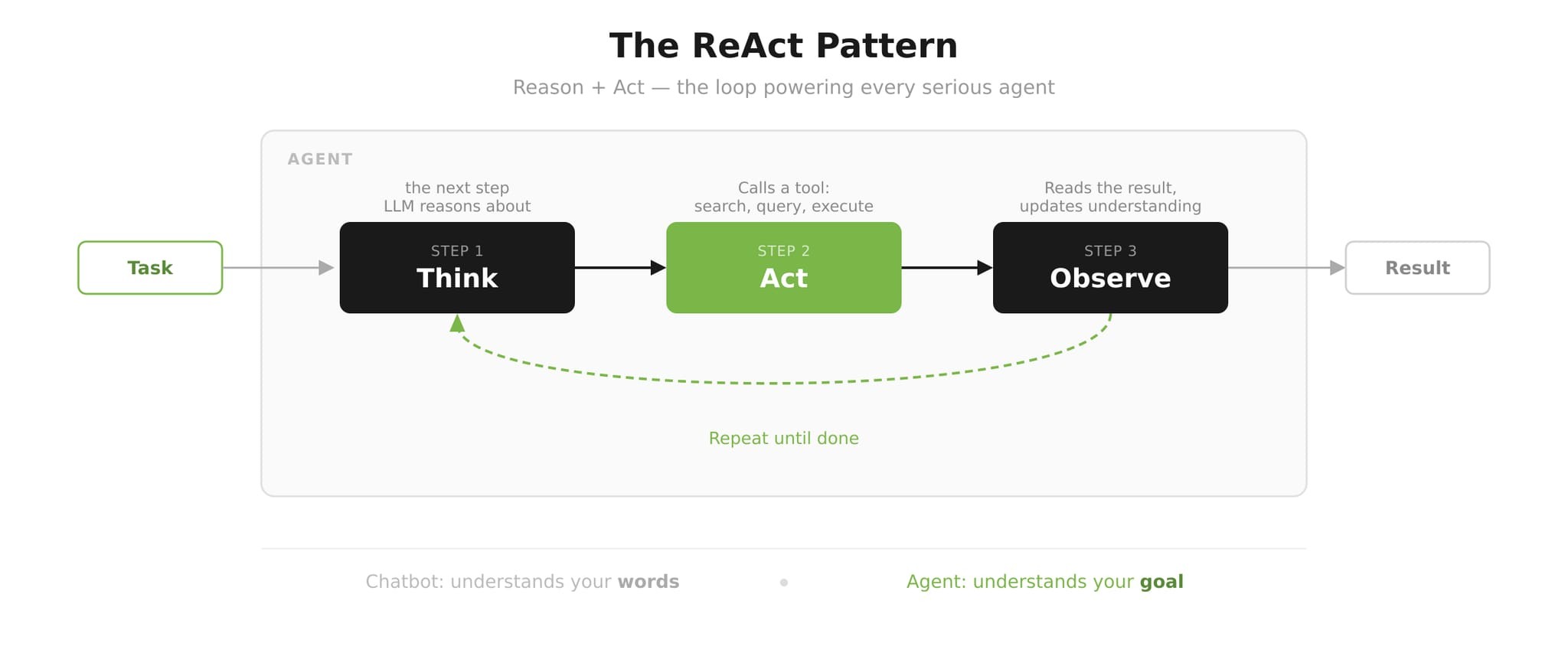

Figure 2: The ReAct Pattern. Based on Yao et al., 2022.

The foundational pattern powering virtually every serious agent today is called ReAct (Yao et al., 2022), short for Reason + Act. The cycle goes: Think → Act → Observe → Repeat. The model reasons about what it needs to do, takes an action using one of its tools, observes the result, and then reasons about the next step - over and over until the task is complete. It's elegant, it's powerful, and it's been the heartbeat of production agents since the paper dropped.
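The ReAct paper prompts the model to interleave "Thought:", "Action:", and "Observation:" lines, with actions written like `Search[...]` and a `Finish[...]` action that ends the episode. The sketch below assumes that text format. `fake_llm` is a hypothetical stand-in scripted to search once and then finish, so the loop runs without a real model; the `Search` tool is likewise a stub.

```python
import re

# Matches lines such as "Action: Search[France]" in the ReAct text format.
ACTION_RE = re.compile(r"^Action:\s*(\w+)\[(.*)\]\s*$", re.MULTILINE)

def parse_action(model_output: str) -> tuple[str, str]:
    match = ACTION_RE.search(model_output)
    if match is None:
        raise ValueError("model output has no Action line")
    return match.group(1), match.group(2)

def react(question, llm, tools, max_steps=8):
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        step = llm(transcript)  # Think: the model writes a Thought and an Action
        transcript += step + "\n"
        name, arg = parse_action(step)
        if name == "Finish":  # special action that ends the loop
            return arg, transcript
        observation = tools[name](arg)  # Act: run the chosen tool
        transcript += f"Observation: {observation}\n"  # Observe, then repeat
    raise RuntimeError("step budget exhausted")

def fake_llm(transcript):
    # Hypothetical stand-in for an LLM: search once, then finish.
    if "Observation:" not in transcript:
        return "Thought: I need the capital of France.\nAction: Search[France]"
    return "Thought: The observation answers the question.\nAction: Finish[Paris]"

tools = {"Search": lambda query: f"{query}: capital city is Paris."}
answer, trace = react("What is the capital of France?", fake_llm, tools)
print(answer)  # Paris
```

The whole transcript is fed back to the model on every step, which is why the reasoning stays grounded in what the tools actually returned.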

Here's the clearest way to understand the difference. You say: "Find me cheap flights to Tokyo next month." A chatbot says: "Here's a link to Google Flights!" An agent opens a browser, searches four travel sites, compares 23 flight options, cross-references your calendar to find the best dates, notices you have a meeting on the 14th, and books the cheapest option for the 7th - then sends you a confirmation. One understands your words. The other understands your goal.

How We Got Here: A 2-Year Speed Run

The story of AI agents from late 2024 to late 2025 is a compression event unlike anything in recent tech history. Here's the race, in three phases.

Late 2024 - The Framework Era

The prevailing wisdom in late 2024 was that complex tasks required complex systems. Everyone was building multi-agent orchestration: LangGraph from LangChain, CrewAI, AutoGen from Microsoft. The idea was appealing and logical: split a complex task into specialist sub-agents - a Researcher agent, a Coder agent, a Reviewer agent - and wire them together in directed graphs so each expert could contribute their slice of intelligence.

These architectures looked magnificent in diagrams. They had nodes and edges and beautiful flowcharts. The demos were impressive. But in production? They were deeply fragile. Errors cascaded across agent boundaries. Debugging was a nightmare. The latency was punishing. And the coordination overhead between agents frequently swamped any intelligence gains from specialization.

Practitioners started quietly admitting to each other that their multi-agent pipelines were spending more compute arguing about what to do than actually doing it.
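A back-of-the-envelope calculation shows why chained specialists were fragile. The numbers here are hypothetical: assume each agent in a sequential Researcher → Coder → Reviewer pipeline succeeds 90% of the time, that failures are independent, and that any single failure sinks the run. Under those assumptions, the pipeline's reliability is the product of its parts.

```python
import math

def chain_success(step_success_rates):
    """Success probability of a sequential pipeline, assuming steps fail
    independently and any single failure breaks the whole run."""
    return math.prod(step_success_rates)

# Hypothetical three-agent pipeline, each step 90% reliable.
three_agents = chain_success([0.9, 0.9, 0.9])
print(round(three_agents, 3))  # 0.729
```

Three individually decent agents yield roughly a 73% pipeline, and every extra specialist multiplies in another factor below 1. Real failures are often correlated and sometimes caught by retries, so treat this as intuition, not a measurement.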

Mid 2025 - The Models Got Too Good

Then something happened faster than anyone anticipated: the models got dramatically better. Specifically, models like Claude got good enough that a single capable model with the right tools simply outperformed elaborate multi-agent pipelines built on weaker models.

Figure 3: A comparison of agentic architectures. Based on several sources discussed in the main text.

The most instructive case study came from Vercel. Their text-to-SQL agent had been carefully engineered with 17 specialized tools, each handcrafted to handle a specific type of database query or edge case. It achieved an 80% success rate. Then they did something radical: they threw away 15 of those tools, leaving just two raw, general-purpose ones, bash and SQL execution. The result: a 100% success rate, 3.5× faster execution, and nearly 40% fewer tokens consumed (Qu, 2025).
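This is not Vercel's code. It is a sketch of what a "two general-purpose tools" surface can look like: one tool that runs an arbitrary shell command and one that runs an arbitrary SQL query. The names `run_bash`, `run_sql`, and `make_tools` are hypothetical, and an in-memory SQLite database stands in for a real warehouse.

```python
import sqlite3
import subprocess

def run_bash(command: str, timeout: float = 10.0) -> str:
    """General-purpose shell tool. Run it inside a sandbox in any real deployment."""
    proc = subprocess.run(["bash", "-c", command], capture_output=True, text=True, timeout=timeout)
    return proc.stdout if proc.returncode == 0 else f"error: {proc.stderr}"

def run_sql(conn: sqlite3.Connection, query: str) -> list:
    """General-purpose SQL tool: execute whatever query the model writes."""
    return conn.execute(query).fetchall()

def make_tools(conn: sqlite3.Connection) -> dict:
    # The entire tool surface the model sees: two broad tools instead of many narrow ones.
    return {"bash": run_bash, "sql": lambda query: run_sql(conn, query)}

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, amount REAL)")
conn.executemany("INSERT INTO orders VALUES (?, ?)", [(1, 9.5), (2, 20.0)])
tools = make_tools(conn)
rows = tools["sql"]("SELECT COUNT(*), SUM(amount) FROM orders")
print(rows)  # [(2, 29.5)]
```

The design trade-off: narrow tools encode the engineer's guesses about which queries will be needed, while broad tools let the model compose its own, at the cost of needing a sandbox and permissions you trust.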

"We were constraining the model's reasoning because we didn't trust the model to reason." — Vercel Engineering, 2025

Late 2025 - Convergence

By late 2025, the realization had spread industry-wide. Framework makers started quietly renaming and restructuring. Different brands, different UX - but under the hood, every serious player had landed on the same architecture:

- LangChain pivoted to "Deep Agents"

- DeerFlow 2.0 relaunched, rebranding itself from a "multi-agent framework" to a "SuperAgent harness"

- OpenClaw extended agents into Slack and Telegram - where people actually work

The convergence point: ReAct loop + Middleware + Skills. The race was over. The destination was identical.
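"Middleware" is the least self-explanatory part of that formula. One common reading, assumed here, is a chain of functions that rewrite the message list before every model call, so cross-cutting concerns such as the system prompt or context trimming live outside the ReAct loop itself. The function names below are hypothetical and do not mirror any specific framework's API.

```python
from typing import Callable

Message = dict
Middleware = Callable[[list[Message]], list[Message]]

def add_system_prompt(prompt: str) -> Middleware:
    def mw(messages):
        if messages and messages[0]["role"] == "system":
            return messages
        return [{"role": "system", "content": prompt}] + messages
    return mw

def keep_last_n_tool_results(n: int) -> Middleware:
    def mw(messages):
        tool_idx = [i for i, m in enumerate(messages) if m["role"] == "tool"]
        # Drop every tool result except the n most recent ones.
        drop = set(tool_idx[:-n]) if n > 0 else set(tool_idx)
        return [m for i, m in enumerate(messages) if i not in drop]
    return mw

def with_middleware(model_call, middlewares):
    """Wrap a model call so each middleware runs, in order, before it."""
    def wrapped(messages):
        for mw in middlewares:
            messages = mw(messages)
        return model_call(messages)
    return wrapped

seen = []
def fake_model(messages):  # hypothetical stand-in that records what it was sent
    seen.append(messages)
    return "ok"

call = with_middleware(fake_model, [add_system_prompt("You are helpful."), keep_last_n_tool_results(1)])
history = [{"role": "user", "content": "hi"},
           {"role": "tool", "content": "r1"},
           {"role": "tool", "content": "r2"}]
call(history)
sent = [m["content"] for m in seen[-1]]
print(sent)  # ['You are helpful.', 'hi', 'r2']
```

Because each middleware returns a new list, the agent's full history stays intact while the model sees a curated view of it.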

The OS Analogy: What This Architecture Actually Is

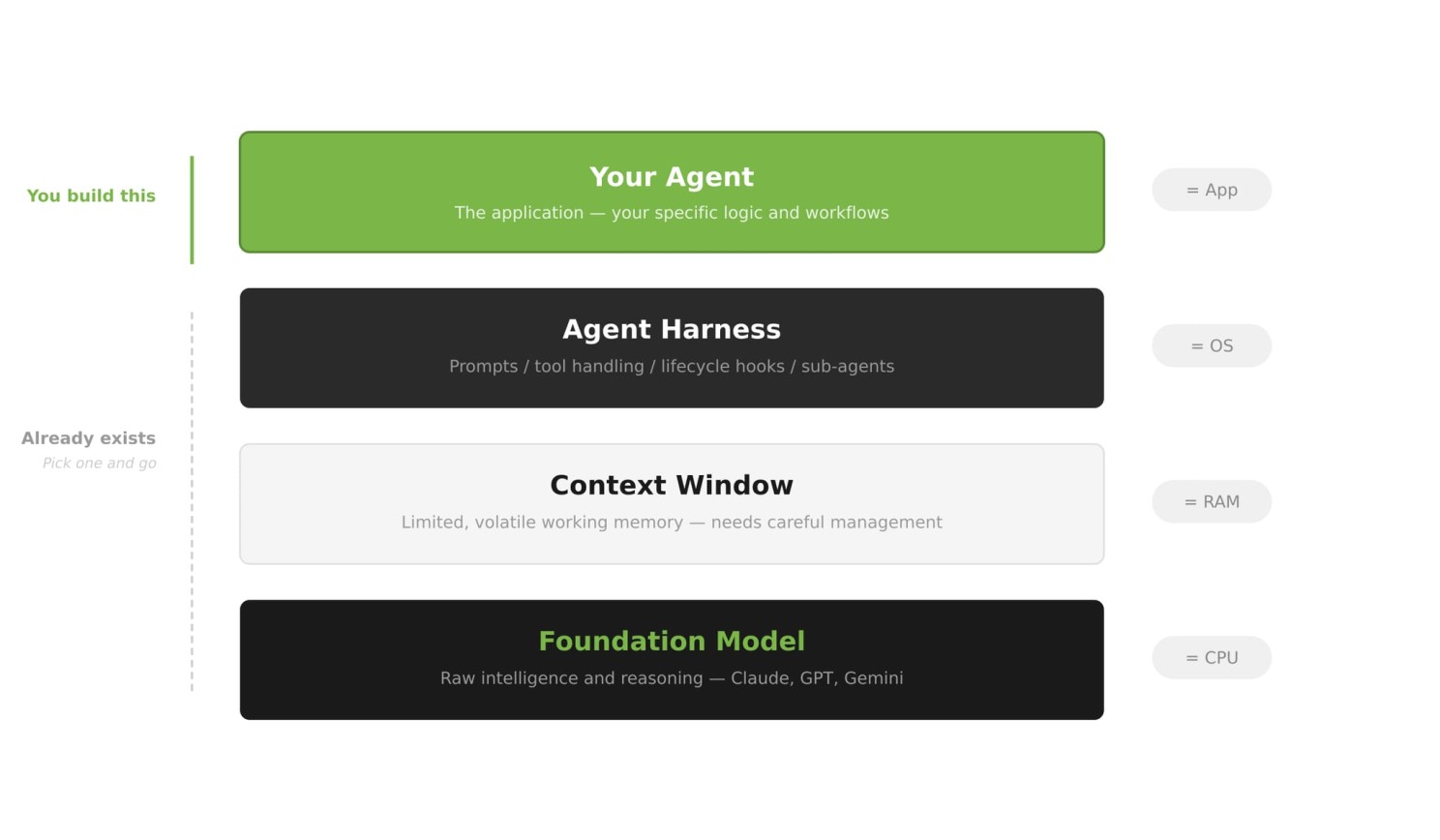

Figure 4: The Agent Stack with a computer analogy. Based on Schmid, 2026.

If you're new to this, all the terminology can feel overwhelming. Let me give you an analogy that makes it click, courtesy of Phil Schmid (2026). Think of an AI agent system like a computer:

- Model = CPU - the raw processing power, the thing actually doing the reasoning. Swap in a better model, you get smarter reasoning.

- Context Window = RAM - your working memory. It's limited, volatile, and what you put in it determines everything you can currently do. When the task ends, it clears.

- Agent Harness = Operating System - the layer that manages everything else: the boot sequence (system prompt), tool integrations (drivers), context curation, and sub-agent coordination.

- Agent = Application - your specific logic, your Skills, your purpose-built behavior running on top of this infrastructure.

This analogy also clarifies the key distinction: framework vs harness. A framework (LangChain, LlamaIndex) gives you Lego bricks - you assemble everything yourself. A harness is a pre-built operating system: prompt presets, tool-call handling, lifecycle hooks, and sub-agent management out of the box. You plug in your logic; the harness handles the plumbing.

You don't build an OS from scratch to run an app. Same deal now.

The hard problem has shifted. In 2024, the question was "how do I wire agents together?" That's mostly solved. The new hard problem is "how do I manage what my agent knows at any given moment?" - which the field calls Context Engineering.

"… context engineering is the delicate art and science of filling the context window with just the right information for the next step." — Andrej Karpathy, 2025

Manus, one of the most demanding agentic systems publicly analyzed, gives you a sense of the scale: approximately 50 tool calls per task, with a striking 100:1 input-to-output token ratio (Ji, 2025). To make this economically viable, they use three core strategies:

- Reduce - compact old results, summarize past observations, prune what the agent no longer needs

- Offload - write intermediate results to the filesystem so they don't have to live in context

- Isolate - spin up sub-agents with their own fresh context windows for self-contained tasks
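The three strategies can be sketched as small functions over a message list. These are hypothetical illustrations, not Manus code: `reduce_history` truncates older tool results (a real system might have the model summarize them instead), `offload` writes a large result to disk and leaves only a pointer in context, and `isolate` hands a subtask to a sub-agent that starts from a fresh context and returns only a summary.

```python
import tempfile
from pathlib import Path

def reduce_history(messages, keep_recent=2, max_chars=40):
    """Reduce: truncate tool results older than the `keep_recent` most recent ones."""
    tool_positions = [i for i, m in enumerate(messages) if m["role"] == "tool"]
    old = set(tool_positions[:-keep_recent]) if keep_recent > 0 else set(tool_positions)
    reduced = []
    for i, m in enumerate(messages):
        if i in old and len(m["content"]) > max_chars:
            m = {**m, "content": m["content"][:max_chars] + "...[truncated]"}
        reduced.append(m)
    return reduced

def offload(result, workdir, name):
    """Offload: write a large result to disk and keep only a pointer in context."""
    path = Path(workdir) / f"{name}.txt"
    path.write_text(result)
    return {"role": "tool", "content": f"Saved {len(result)} chars to {path}"}

def isolate(subtask, run_subagent):
    """Isolate: run a sub-agent on a fresh context; only its summary comes back."""
    fresh_context = [{"role": "user", "content": subtask}]
    return {"role": "tool", "content": f"Sub-agent summary: {run_subagent(fresh_context)}"}

# Usage with made-up messages and a stub sub-agent.
history = [
    {"role": "user", "content": "Research competitors"},
    {"role": "tool", "content": "A" * 100},
    {"role": "tool", "content": "B" * 100},
    {"role": "tool", "content": "C" * 10},
]
reduced = reduce_history(history)
with tempfile.TemporaryDirectory() as d:
    pointer = offload("x" * 5000, d, "page_dump")
    saved_ok = (Path(d) / "page_dump.txt").exists()
summary = isolate("Summarize pricing pages", lambda ctx: f"read {len(ctx)} message(s)")
```

All three shrink what the model must re-read on every call, which matters most when, as with Manus, input tokens outnumber output tokens by a wide margin.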

Anthropic's engineering team, writing about long-running agents in 2026, identified the deepest challenge: each new agent session starts with zero memory - like a shift worker with amnesia (Anthropic, 2026). Their fix: structured external memory - progress files, git history, and standardized handoff documents that give the next session enough context to pick up exactly where the last one left off.
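A handoff document can be very simple. The sketch below assumes a JSON file named `progress.json` with `done`, `next`, and `notes` fields; the file name and schema are made up for illustration, and a real setup would keep it alongside git history as described above.

```python
import json
import tempfile
from pathlib import Path

PROGRESS_FILE = "progress.json"  # hypothetical name and schema

def load_progress(workdir: Path) -> dict:
    path = workdir / PROGRESS_FILE
    if not path.exists():
        # First session ever: nothing to hand off yet.
        return {"done": [], "next": None, "notes": []}
    return json.loads(path.read_text())

def save_progress(workdir: Path, progress: dict) -> None:
    # Called at the end of a session so the next one can resume.
    (workdir / PROGRESS_FILE).write_text(json.dumps(progress, indent=2))

def start_session(workdir: Path) -> str:
    """Build the opening message for a fresh session from the handoff file."""
    p = load_progress(workdir)
    done = ", ".join(p["done"]) or "nothing yet"
    return f"Completed so far: {done}. Next step: {p['next'] or 'plan the task'}."

with tempfile.TemporaryDirectory() as d:
    wd = Path(d)
    first = start_session(wd)
    save_progress(wd, {"done": ["set up repo", "wrote tests"], "next": "implement parser", "notes": []})
    second = start_session(wd)

print(first)   # Completed so far: nothing yet. Next step: plan the task.
print(second)  # Completed so far: set up repo, wrote tests. Next step: implement parser.
```

The point is that memory lives outside the context window: each session starts with amnesia, but it reads the file before doing anything else.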

Teaching Your Agent: From MCP to Skills

Here's the part most people building agents miss - and it's the most important shift in the entire field.

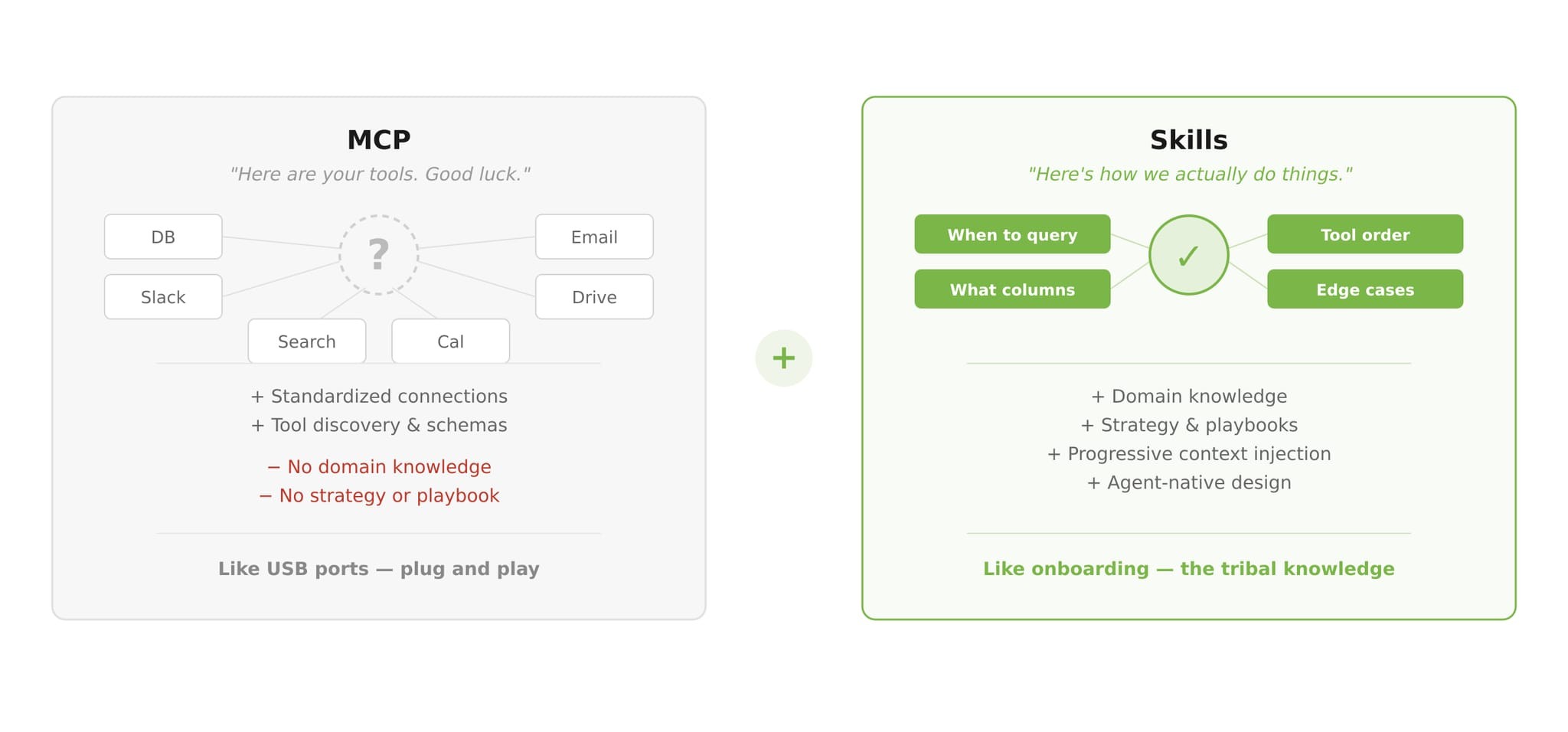

The architecture is free. If you need to build an agent today, you can start with an open-source harness in an afternoon. The heavy lifting is done. The tools are standardized. Anthropic released the Model Context Protocol (MCP) in late 2024 - essentially USB ports for agent capabilities. Want to give your agent access to a database? There's an MCP server for that. File system? Email? GitHub? MCP. Within a year, it became the de facto standard for connecting agents to external tools. The tooling layer is solved.
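Under the hood, MCP messages are JSON-RPC 2.0. The sketch below shows the shape of the two core tool methods, `tools/list` and `tools/call`, using a toy in-process handler with one hypothetical `get_weather` tool. It omits the initialization handshake and the transport (stdio or HTTP); in practice you would use an official MCP SDK rather than hand-rolling messages.

```python
import json
from itertools import count

_next_id = count(1)

def make_request(method, params=None):
    """Client side: build a JSON-RPC 2.0 request string."""
    msg = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        msg["params"] = params
    return json.dumps(msg)

# Toy server state: one hypothetical tool, described with a JSON Schema.
TOOLS = [{
    "name": "get_weather",
    "description": "Current weather for a city",
    "inputSchema": {
        "type": "object",
        "properties": {"city": {"type": "string"}},
        "required": ["city"],
    },
}]

def handle(raw):
    """Toy server side: answer tools/list and tools/call."""
    req = json.loads(raw)
    if req["method"] == "tools/list":
        result = {"tools": TOOLS}
    elif req["method"] == "tools/call":
        # Only one tool exists here, so the toy does not dispatch on params["name"].
        city = req["params"]["arguments"]["city"]
        result = {"content": [{"type": "text", "text": f"Sunny in {city}"}], "isError": False}
    else:
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": req["id"], "error": error})
    return json.dumps({"jsonrpc": "2.0", "id": req["id"], "result": result})

listing = json.loads(handle(make_request("tools/list")))
call = json.loads(handle(make_request(
    "tools/call", {"name": "get_weather", "arguments": {"city": "Tokyo"}})))
print(call["result"]["content"][0]["text"])  # Sunny in Tokyo
```

Note what the protocol does and doesn't carry: a name, a description, and an input schema. Nothing in it says when to call the tool or how to judge the result.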

But here's what nobody tells you: plugging in a tool is not the same as the agent knowing how to use it well.

Imagine you give a brilliant new hire access to all your company tools - Slack, databases, dashboards, CRM, deployment pipeline. Great. Now watch them spin for three days not knowing what to do, which tool to use when, in what order, what the edge cases are, or how to interpret results in your specific context. Access without knowledge is paralysis.

That's where Skills come in - formalized in Claude's late 2025 update, but implicit in every successful agent deployment before that. A Skill isn't just an API endpoint - it's domain knowledge packaged for an agent. It tells the agent when to use a tool, in what order, what to watch out for, how to interpret ambiguous results, and what "done" looks like. Skills are injected into context progressively, on demand, designed from the agent's perspective rather than the human's.
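In Anthropic's Agent Skills format, a skill is a folder containing a `SKILL.md` file whose YAML frontmatter carries a `name` and a `description`; only that metadata sits in context up front, and the body is read when the skill becomes relevant. The sketch below imitates that progressive loading with a hypothetical skill and a deliberately naive frontmatter parser (simple `key: value` lines only, no YAML library). The skill's content is invented for illustration.

```python
SKILL_MD = """---
name: quarterly-report
description: How to pull and sanity-check quarterly revenue numbers.
---
1. Query the finance warehouse, not the CRM; CRM totals lag by a week.
2. If a region is missing, report it as missing rather than zero.
3. Done means: totals reconcile with last quarter's closing report.
"""

def parse_skill(text: str) -> dict:
    """Split simple 'key: value' frontmatter from the Markdown body."""
    _, front, body = text.split("---\n", 2)
    meta = dict(line.split(": ", 1) for line in front.strip().splitlines())
    return {**meta, "body": body.strip()}

class SkillLibrary:
    def __init__(self, skill_texts):
        self.skills = {s["name"]: s for s in map(parse_skill, skill_texts)}

    def index(self) -> str:
        """Always in context: one cheap line per skill."""
        return "\n".join(f"- {n}: {s['description']}" for n, s in self.skills.items())

    def load(self, name: str) -> str:
        """Loaded on demand, only when the agent decides the skill applies."""
        return self.skills[name]["body"]

lib = SkillLibrary([SKILL_MD])
print(lib.index())  # - quarterly-report: How to pull and sanity-check quarterly revenue numbers.
```

The body is where the "when, in what order, what to watch out for, what done looks like" knowledge lives, and it costs context only when it is actually needed.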

MCP gives the new hire access to the tools. Skills are the onboarding - the playbook, the tribal knowledge, the "here's how we actually do things around here." One without the other gets you a capable person sitting at a desk, staring at a screen, waiting for someone to explain what they're supposed to be doing.

Figure 5: A comparison between MCP and Skills - with a new hire analogy.

This is the Bitter Lesson (Sutton, 2019) playing out in real time. Richard Sutton's famous 2019 essay argued that in AI research, general methods that leverage computation always eventually beat hand-crafted human knowledge. The lesson for agents: stop hand-coding elaborate, brittle pipelines that encode your assumptions about how a task should be solved. Start teaching agents the knowledge they need, and trust them to figure out the execution.

The Vercel case proved it empirically. Schmid's "Build to Delete" principle reinforces it philosophically: design everything modularly, and assume that the next model upgrade will make your cleverest piece of custom code unnecessary. Because it will. The agent's job is to reason. Our job is to make sure it has the right knowledge to reason with.

What Actually Matters Now

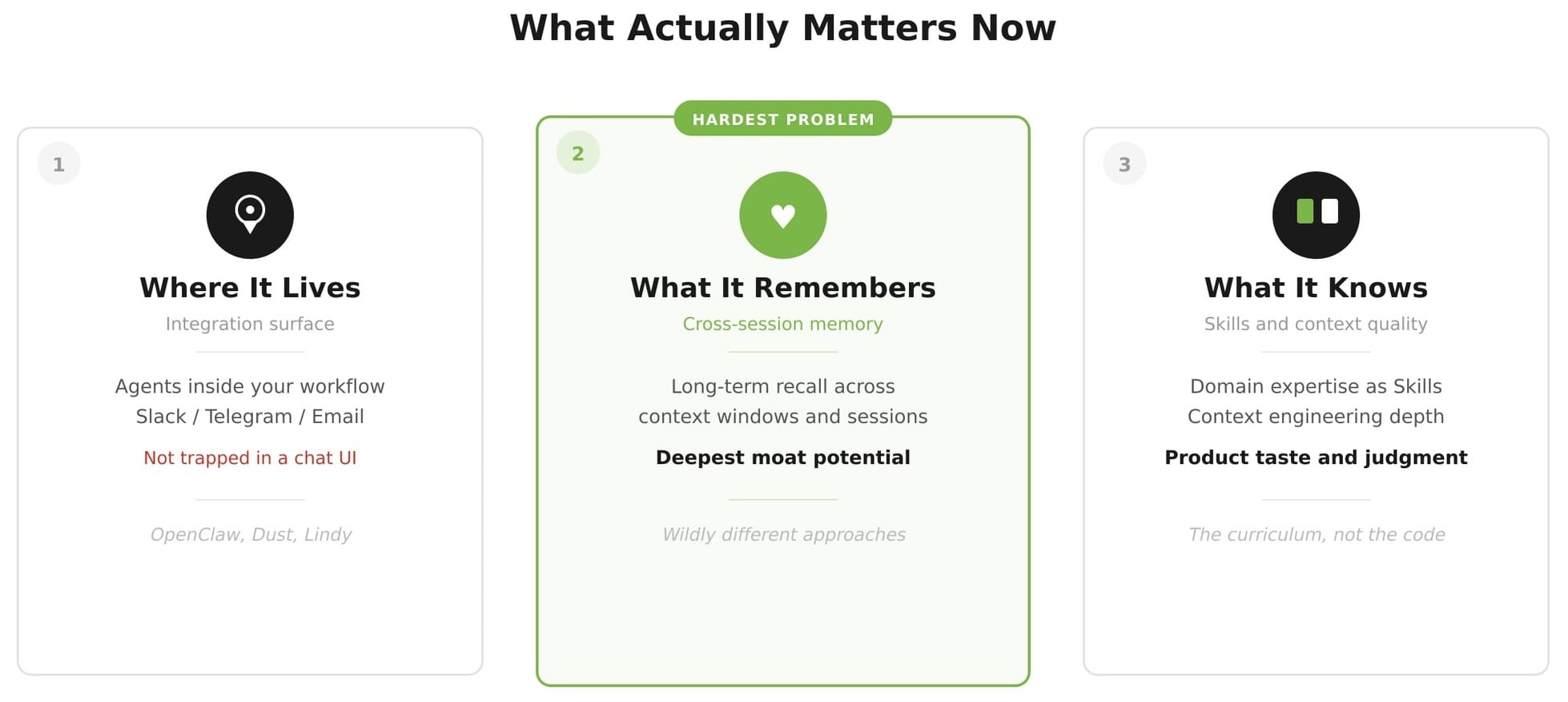

Figure 6: Three main dimensions of competitive differentiation in production agent systems.

So we're starting fresh. We've closed most of our tabs. We've picked a harness. Three things now separate the agents that win from the demos that die in prototype:

- Where the agent lives. An agent trapped in a demo chat UI gets used twice and forgotten. An agent embedded inside your actual workflow - your email, your Slack, your Telegram - gets used every day. The interface is not cosmetic. It determines whether the agent becomes a habit or a curiosity. OpenClaw's bet was putting agents where people already work, and it turns out that bet was right.

- What the agent remembers. Cross-session memory - the ability to remember what happened yesterday, last week, or during last month's project - is the hardest unsolved problem in production agent deployment, and the deepest competitive moat (Anthropic, 2026). Anyone can spin up a capable agent for a single session. Very few can build one that gets smarter the longer you work with it.

- What the agent knows. Skills, context, taste. The agent that wins won't necessarily run the biggest model or the most sophisticated architecture. It will be the one that was taught well: whose Skills encode genuine domain expertise, whose context engineering is thoughtful, and whose prompts reflect hard-won lessons from real usage. This is the curriculum problem, and it's wide open.

If I were starting today: pick a harness, learn context engineering, and invest seriously in Skills. The architecture is a solved problem. The curriculum isn't. And whoever figures out the curriculum first will build something the architecture alone could never deliver.

The FOMO was real. But chill, we didn't miss the race - we missed the chaotic sprint that led everyone to the same starting line. We get to begin there.

I guess that's not late. That's lucky 🍀

References

- Yao, S. et al. (2022). "ReAct: Synergizing Reasoning and Acting in Language Models." arXiv:2210.03629

- Sutton, R. (2019). "The Bitter Lesson." incompleteideas.net

- Anthropic (2025). "Building Effective Agents." anthropic.com

- Qu, A. (2025). "We Removed 80% of Our Agent's Tools." Vercel Blog

- Ji, Y. (2025). "Context Engineering for AI Agents: Lessons from Building Manus." manus.im/blog

- Schmid, P. (2026). "The Importance of Agent Harness in 2026." philschmid.de

- Anthropic (2026). "Effective Harnesses for Long-Running Agents." anthropic.com/engineering

- Karpathy, A. (2025). On context engineering. X/Twitter

- LangChain (2026). "Context Management for Deep Agents." blog.langchain.com

- ByteDance (2026). "Improving Deep Agents with harness engineering." github.com/bytedance/deer-flow

- OpenClaw (2026). openclaw.ai

Disclaimer: AI was used to assist with grammar polishing and rhetorical refinement. The opinions and conclusions are the author's own and do not represent those of any past or current employer. Feedback and corrections are always welcome.